I’ve long believed that modern hip-hop and R&B suffer from too much post-production. Average singers can wail away in front of the microphone yet sound amazing on the radio, after the post-production techs have fixed all the mistakes. This is why all the music on the radio sounds so similar: it’s all pitch-perfect.

Today, I discovered just how little talent it requires to make a modern pop song.

MacBook Pro + Built-in Mic + GarageBand + AutoTune + 1 hour =

[embedded audio player] VS. [embedded audio player]

Go ahead and click PLAY above to give my cover song a listen.

AutoTune

The signature vocal effect that you hear is produced by an audio processor called AutoTune (read more about it on Wikipedia). AutoTune is used by the music industry to correct pitch in vocal and instrumental performances. Essentially, it allows artists to hide their mistakes.

AutoTune works by analyzing a music track’s pitches over time. It compares every note’s pitch to the musical key of the audio track (you have to tell it what key your audio track is in). Any time AutoTune sees a note that is off-key, it corrects the note by shifting it up or down to the nearest note in that key.
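To make that idea concrete, here is a rough Python sketch of the "snap to the nearest note in the key" step. It is only my toy illustration, not how the real plugin is implemented: the function names are made up, it assumes a major key, and it assumes standard tuning where A4 = 440 Hz.

```python
import math

A4_HZ = 440.0  # assumption: standard concert tuning
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
MAJOR_SCALE_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets of a major scale


def hz_to_midi(freq_hz):
    """Convert a frequency to a (fractional) MIDI note number."""
    return 69 + 12 * math.log2(freq_hz / A4_HZ)


def midi_to_hz(midi_note):
    """Convert a MIDI note number back to a frequency in Hz."""
    return A4_HZ * 2 ** ((midi_note - 69) / 12)


def snap_to_key(freq_hz, key_root="C"):
    """Return (corrected_hz, midi_note) for the nearest note in a major key.

    Hypothetical helper for illustration only.
    """
    root = NOTE_NAMES.index(key_root)
    allowed = {(root + step) % 12 for step in MAJOR_SCALE_STEPS}
    midi = hz_to_midi(freq_hz)
    base = round(midi)
    # A major scale never has gaps wider than two semitones, so looking
    # two semitones either side always finds at least one in-key note.
    candidates = [n for n in range(base - 2, base + 3) if n % 12 in allowed]
    nearest = min(candidates, key=lambda n: abs(n - midi))
    return midi_to_hz(nearest), nearest


if __name__ == "__main__":
    # A slightly sharp A4 (450 Hz) gets pulled back down to 440 Hz.
    print(snap_to_key(450.0, "C"))
    # 470 Hz is close to A#4, which is not in C major, so it moves to B4.
    print(snap_to_key(470.0, "C"))
```

A real pitch corrector also has to detect the pitch from the audio and resynthesize the shifted sound, which this sketch skips entirely.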

However, the plugin does much more than pitch correction. It can also be used to deliberately distort vocals. When you crank up the settings to the max, you get the famous T-Pain vocal effect.
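My understanding is that the robotic sound comes from the correction happening instantly, so the voice jumps from note to note instead of sliding naturally. Here is a toy Python model of that idea. The `speed` parameter and the function name are my own inventions for illustration, and I am assuming the plugin behaves roughly like this; I don't know its actual internals.

```python
def glide_toward_targets(target_notes, speed):
    """Follow a per-frame sequence of target notes (MIDI numbers).

    speed is the fraction of the remaining gap closed on each frame:
    1.0 jumps straight to the target, smaller values slide toward it.
    Hypothetical model, not the plugin's real algorithm.
    """
    if not 0 < speed <= 1:
        raise ValueError("speed must be in the range (0, 1]")
    output = []
    current = float(target_notes[0])
    for target in target_notes:
        current += speed * (target - current)
        output.append(current)
    return output


if __name__ == "__main__":
    targets = [60, 60, 60, 64, 64, 64]  # C4 for three frames, then E4
    print(glide_toward_targets(targets, 1.0))  # hard jump between notes
    print(glide_toward_targets(targets, 0.5))  # gradual slide up to E4
```

With `speed` at 1.0 the pitch steps straight from one note to the next, which is the stair-step contour behind the effect. Lower values give a softer, more natural-sounding slide.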

Sidenote: T-Pain wasn’t actually the first artist to use AutoTune for all his vocals, although he is certainly the most famous. AutoTune was first used by Eiffel 65 for their 1999 hit single Blue (Da Ba Dee). (I loved this song as a kid. :-)) But see the update at the bottom of this post.

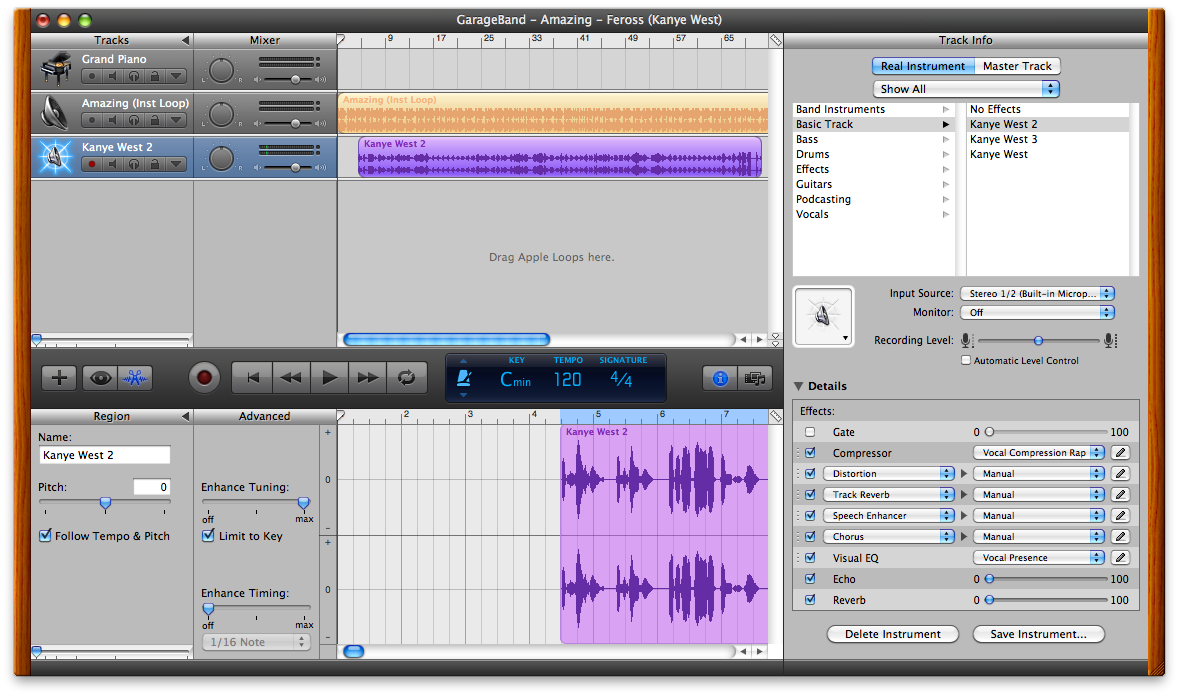

These are the Auto-Tune settings in Garageband to make the Kanye West & T-Pain audio effect:

Keep in mind: I am not a singer and I don’t claim to have any natural singing talent. Sometimes I sing in the shower, and I did karaoke one time in high school with some friends. That is the full extent of my singing experience.

Yet, I was able to produce a decent-sounding R&B vocal track. This experiment proves, to me anyway, that anyone with average singing talent can produce a passable modern R&B song.

You should also read/watch:

- Auto-Tune: Why Pop Music Sounds Perfect (Time Magazine)

- Auto-Tune Abuse in Pop Music - 10 Examples (Hometracked)

- Smoking Lettuce: Auto Tune the News #5 (YouTube)

I hope you enjoyed my song! Leave a comment!

P.S. This is my 50th blog post! I’m honestly surprised that I’ve kept it up for this long. Thanks to everyone who has taken the time to read my ramblings, leave a comment, and make this blog a fun project for me! It’s been really fun – here’s to the next 50 posts! :-D

UPDATE (09/23/2009)

A reader (titanicfreak94) sent me this message:

> It’s a very common misconception that Eiffel 65 used the Autotune to create the distorted vocals of Blue, and all their other songs. The actual process they did was called Vocoding. They used a device called a Vocoder, which takes one audio signal, and morphs it to give the effects of another. For example, if they had a clip of somebody singing, they could use the vocoder to mix the voice with a synthesized sound, and the result would be a robotic-sounding voice.

I double-checked and s/he’s most certainly right. The song’s distorted vocals were indeed produced using a vocoder (http://en.wikipedia.org/wiki/Vocoder). Thanks, mysterious tipster!
